At first glance, you may think this title is referring to northwestern US states like Oregon or Idaho. While there certainly are wealthy areas in the northwestern US, I am actually referring to which parts of a given city are wealthy.

After traveling across and living in multiple parts of the United States, I have noticed that cities tend to be wealthier in their northern halves. Until now, this was just conjecture, but I took the opportunity to use publicly available census tract data to investigate my suspicions.

Building the Visual

First, I obtained data from various public data sources. This includes census tract shapefiles, income data, and census tract to county MSA conversions.

I then selected a range of MSAs to analyze. In all I looked at Atlanta, Austin, Boston, Chicago, Dallas, Denver, Houston, Indianapolis, Kansas City, Las Vegas, Los Angeles, Miami, Milwaukee, Minneapolis, Nashville, New Orleans, New York, Oklahoma City, Orlando, Philadelphia, Phoenix, Portland, Salt Lake City, San Antonio, San Francisco, Seattle, Tampa, and Washington DC.

From there, I standardized the latitude and longitude of each MSA such that the most southwestern point in an MSA would have a coordinate of (0,0) while the most northeastern point would have a coordinate of (1,1). This controls for physical size differences between MSAs.

Lastly, I scaled the income of each census tract such that the tract with the highest income in an MSA has an income value of 1 and the lowest income tract has a value of 0. This also controls for wealth differences between MSAs.
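To make the two scaling steps above concrete, here is a minimal sketch of min-max scaling applied within each MSA. It is not the code used for this post: the field names (`msa`, `lon`, `lat`, `income`) and the example tracts are made up for illustration, and it assumes each tract is represented by a single point such as its centroid.

```python
from collections import defaultdict

def min_max(values):
    """Rescale a list of numbers to the range [0, 1]."""
    lo, hi = min(values), max(values)
    span = hi - lo
    # Guard against a degenerate group where every value is identical.
    return [(v - lo) / span if span else 0.0 for v in values]

def scale_by_msa(tracts):
    """Rescale lon, lat, and income to [0, 1] within each MSA.

    `tracts` is a list of dicts with the hypothetical keys
    "msa", "lon", "lat", and "income".
    """
    groups = defaultdict(list)
    for tract in tracts:
        groups[tract["msa"]].append(tract)

    scaled = []
    for msa, rows in groups.items():
        xs = min_max([r["lon"] for r in rows])      # 0 = westernmost, 1 = easternmost
        ys = min_max([r["lat"] for r in rows])      # 0 = southernmost, 1 = northernmost
        incomes = min_max([r["income"] for r in rows])
        for x, y, inc in zip(xs, ys, incomes):
            scaled.append({"msa": msa, "x": x, "y": y, "income": inc})
    return scaled

# Made-up example tracts, for illustration only.
tracts = [
    {"msa": "A", "lon": -84.5, "lat": 33.6, "income": 40000},
    {"msa": "A", "lon": -84.3, "lat": 33.9, "income": 120000},
    {"msa": "A", "lon": -84.4, "lat": 33.75, "income": 80000},
    {"msa": "B", "lon": -97.9, "lat": 30.1, "income": 50000},
    {"msa": "B", "lon": -97.6, "lat": 30.5, "income": 90000},
]
supercity = scale_by_msa(tracts)
```

Because every MSA ends up on the same 0 to 1 scale for both position and income, the scaled tracts from different MSAs can be stacked into one list and plotted on shared axes.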

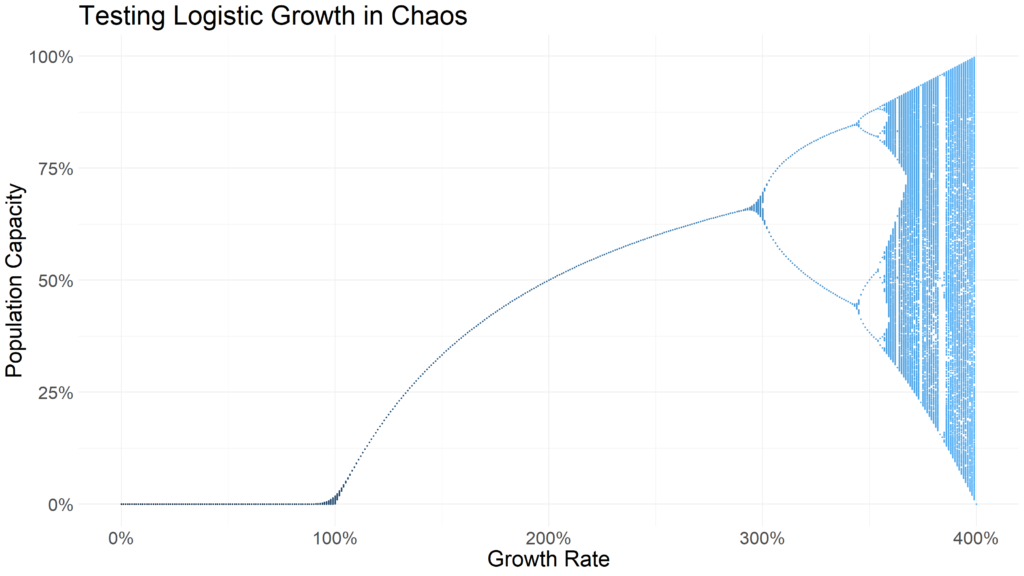

I used this dataset to layer all of the MSA data to create a supercity that represents all of the individual MSAs collectively.

And here is the result! The closer to gold a given tract is, the higher its income. Conversely, the closer to dark blue a tract is, the lower its income. The black dot represents the city center. I observe a fairly clear distinction between the northwest and southeast of US cities.

There are, of course, exceptions to the rule. We can see gold census tracts in the south of some MSAs, though wealth generally appears to be concentrated in the northwest.

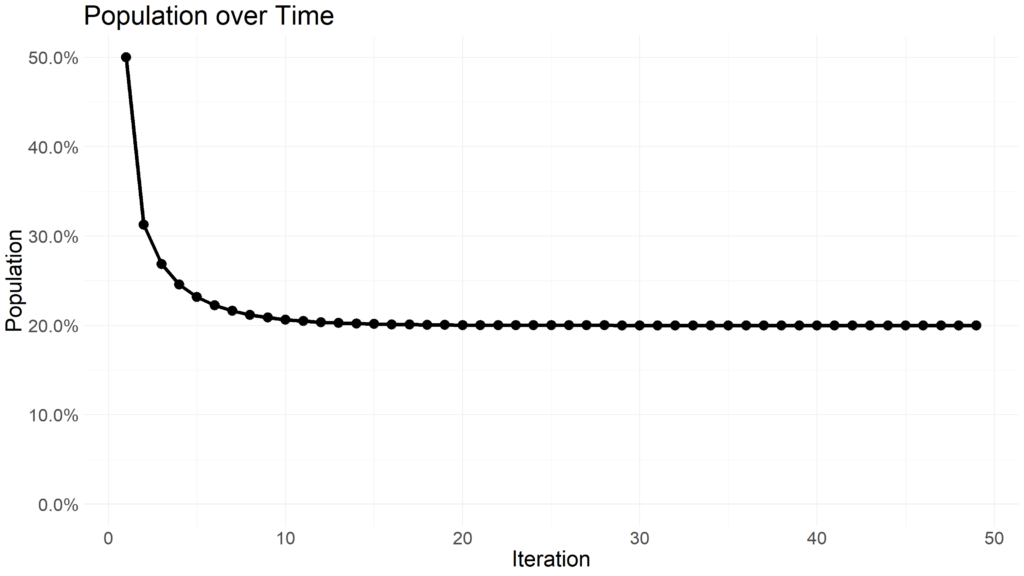

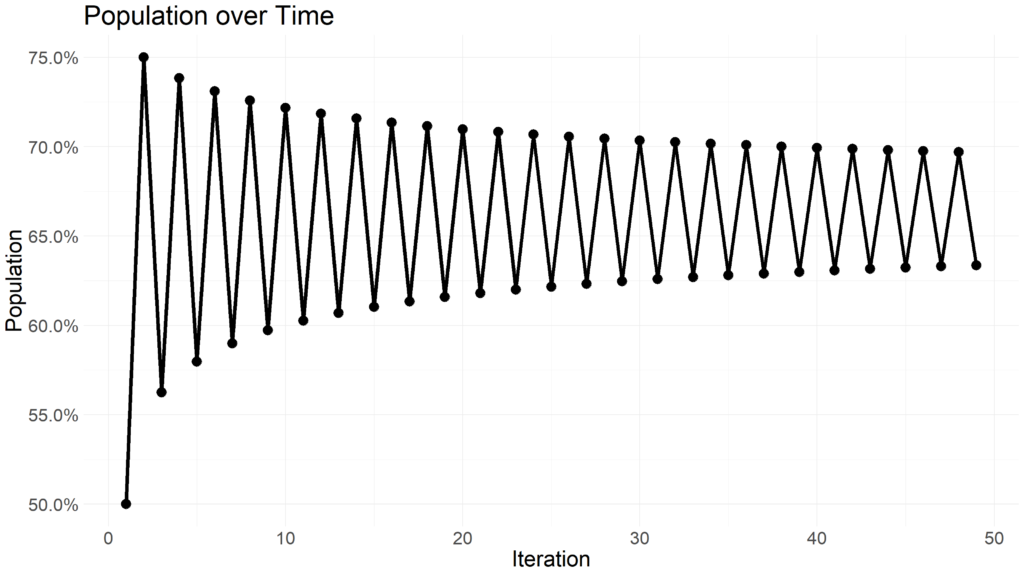

A Simple Explanatory Model

To add some validity to these findings, I fit a very simple linear model that estimates a census tract’s income from its position relative to the city center. Here are the results:

| Term | Coefficient (Converted to USD) |
| --- | --- |
| Intercept | $84,288 |
| Longitude (West/East) | -$6,963 |
| Latitude (North/South) | $7,674 |

The way to read these coefficients is as follows. At the city center, census tracts have, on average, a median household income of $84,288. As you move east, median household income falls (hence the negative coefficient for Longitude), and as you move north, income rises (hence the positive coefficient for Latitude).

In other words, adding the two coefficients together, northwestern tracts have median household incomes roughly $14,600 higher than the city center, or roughly $29,300 higher than their southeastern counterparts.
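The arithmetic behind that comparison can be checked with a few lines of Python. This is only a sketch: the coefficients come from the table above, but treating a "northwestern" tract as one unit north and one unit west of the city center (and a "southeastern" tract as the mirror image) is my simplifying assumption about how to read them.

```python
# Coefficients as reported in the table above, in USD.
INTERCEPT = 84_288
LONGITUDE_COEF = -6_963   # change in income per unit moved east
LATITUDE_COEF = 7_674     # change in income per unit moved north

def predicted_income(east, north):
    """Predicted median household income at an offset from the city center."""
    return INTERCEPT + LONGITUDE_COEF * east + LATITUDE_COEF * north

center = predicted_income(0, 0)
northwest = predicted_income(-1, 1)   # assumed: one unit west, one unit north
southeast = predicted_income(1, -1)   # assumed: one unit east, one unit south

print(northwest - center)     # 14637
print(northwest - southeast)  # 29274
```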

Obviously, this model is oversimplified and would not be a good predictor of tract income given the huge variety of incomes across MSAs in the US, but it does illustrate an interesting point about income vs. tract position in an MSA.
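For readers who want to try something similar, a model of this shape can be fit with ordinary least squares. The sketch below is not the code behind the table; it assumes the model has the form income = b0 + b1 * east + b2 * north, where east and north are offsets from the city center, and it runs on synthetic data generated from known coefficients rather than on census data. The function names are hypothetical.

```python
def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution.
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x

def fit_income_model(rows):
    """Fit income = b0 + b1 * east + b2 * north by ordinary least squares.

    `rows` is a list of (east, north, income) tuples, where east and north
    are offsets from the city center.
    """
    design = [[1.0, east, north] for east, north, _ in rows]
    target = [income for _, _, income in rows]
    # Normal equations: (X^T X) beta = X^T y
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * y for r, y in zip(design, target)) for i in range(3)]
    return solve(xtx, xty)

# Synthetic data generated from known coefficients (not census data).
rows = [(e, n, 80_000 - 5_000 * e + 6_000 * n)
        for e in (-0.5, 0.0, 0.5) for n in (-0.5, 0.0, 0.5)]
intercept, east_coef, north_coef = fit_income_model(rows)
```

On this noise-free synthetic data, the fit recovers the coefficients used to generate it, which is a quick sanity check before pointing the same function at real tract data.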

Closing Thoughts

Before closing out, I want to draw attention to a few specific MSAs where this effect is particularly pronounced. I would argue that the northwest vs. southeast split stands out most in the following six cities, especially Washington DC.

I hope this high-level summary provides some interesting food for thought about the differences in income across US cities.